Defining the null space and verifying vectors belong to it

About this lesson

Null Space Overview:

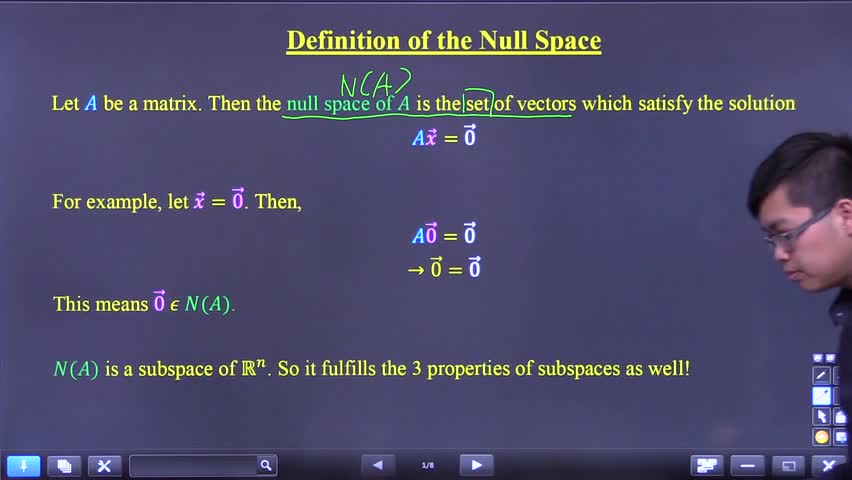

Definition of the null space

• \(N(A) =\) null space of the matrix \(A\)

• The set of all vectors \(x\) that satisfy the equation \(Ax = 0\)

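The membership test implied by this definition can be sketched in a few lines of Python: multiply the matrix by the candidate vector and check whether every entry of the result is zero. The matrix `A`, the vectors `x_in` and `x_out`, and the helper names `mat_vec` and `in_null_space` are hypothetical illustrations, not taken from the lesson. Exact fractions are used so the comparison with zero is not affected by floating-point rounding.

```python
from fractions import Fraction


def mat_vec(A, x):
    """Multiply matrix A (a list of rows) by vector x (a list of entries)."""
    if any(len(row) != len(x) for row in A):
        raise ValueError("each row of A must have as many entries as x")
    return [sum(Fraction(a) * Fraction(v) for a, v in zip(row, x)) for row in A]


def in_null_space(A, x):
    """Return True when A x is the zero vector, i.e. when x belongs to N(A)."""
    return all(entry == 0 for entry in mat_vec(A, x))


# Hypothetical example matrix (an assumption for illustration only).
A = [[1, 2, 3],
     [2, 4, 6]]

x_in = [1, 1, -1]   # row 1: 1 + 2 - 3 = 0, row 2: 2 + 4 - 6 = 0
x_out = [1, 0, 0]   # row 1: 1, row 2: 2, so A x is not the zero vector

print(in_null_space(A, x_in))
print(in_null_space(A, x_out))
```

The zero vector always passes this check, since \(A \cdot 0 = 0\) for any matrix \(A\).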

Video 1 of 8

Defining the null space and verifying vectors belong to it

5 min

Checking if a vector belongs to the null space of a matrix

4 min

Finding a basis for the null space using reduced echelon form

7 min

Proving the null space is a subspace using three properties

9 min

Checking if a vector belongs to the null space of a matrix

5 min

Testing if a vector belongs to the null space of a matrix

3 min

Finding a basis for the null space of a 3x3 matrix

10 min

Finding a two-vector basis for the null space of a 2x3 matrix

9 min